Capability Is Churned Together, Distribution Is Decided Above, Readiness Is the Only Asymmetric Advantage

There is a moment in every emerging technology cycle when the ground shifts beneath an entire industry in a single afternoon. On April 7, 2026, Anthropic announced “Mythos,” a frontier AI model representing such a profound step-change in cybersecurity capabilities that the lab deemed it too risky for public release. Instead of open market access, Anthropic convened a private consortium of over forty consequential tech organizations, granting them exclusive early access to hunt and patch vulnerabilities before the rest of the ecosystem could see the tool. The news traveled in hours. And somewhere in the quiet of a Tuesday morning, an AI security manager read the headline and had to decide what, exactly, to do about it.

Most of the industry’s response to moments like the Mythos drop is instinctive. Get into the consortium. License the tool. Match attacker speed with defender speed. It is the reflex of a profession that has spent three decades learning to move fast because slow has always meant loss. And in a narrow technical sense, the reflex is not wrong.

But it is not the discipline the moment requires. The orchestration of Mythos—where a central authority decides who gets access to a frontier capability and on what terms—is exactly the kind of ecosystem shift the ISACA Advanced in AI Security Management (AAISM) body of knowledge is quietly, insistently, trying to teach us to navigate.

The Trading Floor That Isn’t

The obvious analogy for AI-accelerated cyber is high-frequency trading (HFT). When algorithms collapsed trade execution from seconds to microseconds, strategy ceased to matter the way it had; the differential became the algorithm itself. Experience, judgment, and market feel were all commoditized by a structural change in the speed at which decisions could be made. The winner was the one who deployed the new capability first, and everything else became a level playing field.

It is a seductive analogy for AI in security. If frontier models can discover vulnerabilities faster than human teams can patch them, then the organization that deploys defensive AI first wins, and the organization that hesitates loses. The capability race becomes the whole game.

The analogy survives until one asks what, exactly, is being traded. HFT operates in a closed, fungible, zero-sum system — one asset class, one clearing mechanism, counterparties playing by identical exchange rules. Cybersecurity is none of those things. An enterprise estate is a heterogeneous portfolio of business processes, regulated data, third-party dependencies, human workflows, contractual obligations, and legacy systems under change freeze. Speed is one vector in that portfolio. It is not the vector.

The technologist’s error is to assume that when a new accelerant enters the system, everything else levels. In truth, almost nothing levels. Risk appetite does not level — a regional hospital and a trading firm have radically different tolerance for false positives from an autonomous scanner hitting production. Regulatory posture does not level — deploying offensive-AI tooling against one’s own infrastructure has implications under the EU AI Act, dual-use export controls, and sectoral obligations that vary by jurisdiction. Third-party contracts do not level — you may not have the legal right to probe SaaS components your business depends on. Governance authority does not level — who in your organization is actually empowered to authorize running a frontier offensive capability against the crown jewels, and who owns the residual risk if something breaks?

HFT teaches the wrong lesson about AI security not because speed is irrelevant, but because the real lesson of HFT was never about speed. The real lesson is that unbounded speed produces systemic fragility, and the markets that survived were the ones that governed speed through circuit breakers, kill switches, pre-trade risk checks, and structural rules. The speed advantage was never eliminated; it was absorbed into an architecture that made the system resilient to it. That is the AAISM thesis in one sentence: when everyone is about to sprint, the winner is often the one who built the best shock absorbers.
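For readers who think in code, the shock absorbers named above can be sketched in a few lines. This is only an illustration under stated assumptions: `ScanRequest`, `Guardrails`, `pre_run_check`, and the rate ceiling of 60 are hypothetical names and values invented for this sketch, not anything drawn from the AAISM manual or from a real scanning product. The point is the shape: a kill switch, a scope check, and a rate ceiling all sit in front of the fast thing, the way a pre-trade risk check sits in front of an order.

```python
from dataclasses import dataclass


@dataclass
class ScanRequest:
    """A request to point an autonomous scanner at one asset (hypothetical)."""
    target: str
    in_authorized_scope: bool   # has a risk owner approved this target?
    under_change_freeze: bool   # e.g. a legacy system that must not be touched
    requests_per_minute: int


@dataclass
class Guardrails:
    """Limits set in advance by governance, not by the tool (hypothetical)."""
    max_requests_per_minute: int = 60   # illustrative ceiling, not a recommendation
    kill_switch_engaged: bool = False   # flipped by a human with authority


def pre_run_check(req: ScanRequest, rails: Guardrails) -> tuple[bool, str]:
    """Return (allowed, reason). Checks run from most to least severe."""
    if rails.kill_switch_engaged:
        return False, "kill switch engaged"
    if not req.in_authorized_scope:
        return False, "target outside authorized scope"
    if req.under_change_freeze:
        return False, "target under change freeze"
    if req.requests_per_minute > rails.max_requests_per_minute:
        return False, "rate exceeds circuit-breaker ceiling"
    return True, "allowed"
```

Nothing in the sketch makes the scanner slower. It only ensures that the decision about where speed may be applied was made before the scanner started running.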

A Churning Older Than Markets

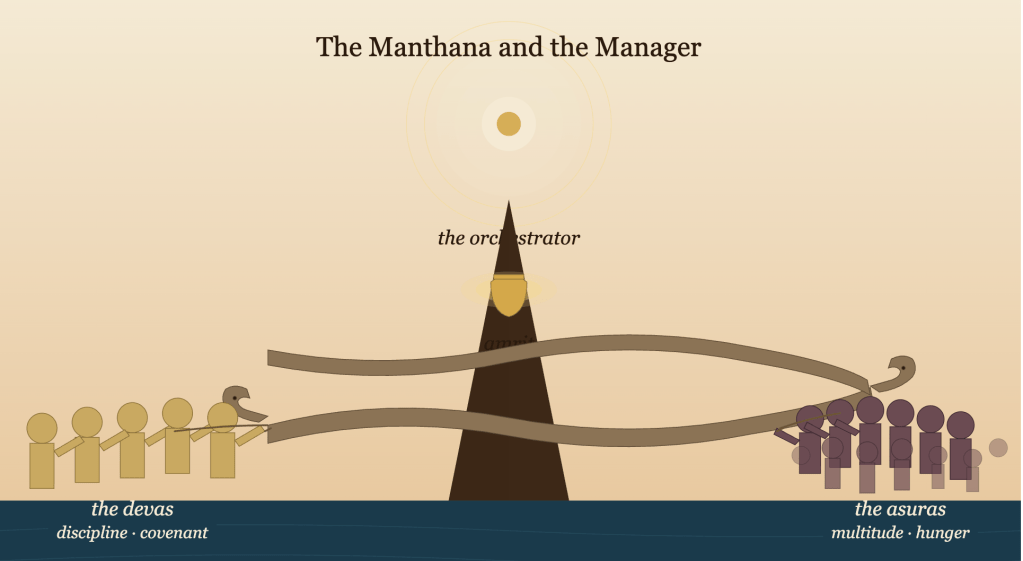

There is an older story that fits better. In the Samudra Manthana — the churning of the cosmic ocean — the devas and asuras are locked in a competition neither can win alone. They agree to collaborate, using Mount Mandara as the churning rod and the serpent Vasuki as the rope. The capability is shared. The tools are shared. The ocean is shared. For a long stretch of the churning, everything is symmetric. And then the amrit — the nectar of immortality — emerges, and the symmetry ends.

It does not end because the devas churn faster. It ends because Vishnu takes the form of Mohini and decides, by authority that sits outside the churning itself, who drinks and who does not. The game looked level all the way until the moment of distribution. The terms of distribution were set by a power the participants did not author.

This is a truer picture of the AI security ecosystem than the trading floor. Frontier capabilities are churned collectively — by labs, by regulators, by researchers, by attackers, by defenders — using a shared substrate of compute, data, and research. For a time, everything looks symmetric. Then capability is distributed, and it is distributed on terms set by actors whose incentives are not identical to any individual organization’s. Frontier labs decide who gets early access. Regulators decide what is permitted. Insurers decide what is underwritable. National AI safety institutes decide what is tested pre-deployment. The individual organization is a participant in the churning, not its orchestrator.

Two consequences follow, and both are central to AAISM’s view of what AI security management actually is.

The first is that the “level playing field” is an illusion maintained only until distribution. Organizations that believe capability will reach them on symmetric terms are, to borrow the mythological frame, asuras reaching for a cup handed to them by a figure whose intentions they have not examined. The AAISM discipline of third-party and supply chain risk management, often treated as an administrative topic, is in fact the discipline of not drinking from cups one has not inspected. The governance terms attached to an offer of capability matter as much as the capability itself. Compute credits are not free; preferential access is not neutral; early-mover partnerships create dependencies that shape future negotiating posture.

The second consequence is more humbling. The asuras did not lose because they were slower. They lost because they lacked the discernment to recognize the terms of distribution when they arrived. They saw Mohini and reached. The devas saw Vishnu and waited. The difference was not speed. It was disposition.

Equipoise Is Not Yet Governance

It is tempting, reading this, to conclude that the AI security manager’s task is to cultivate personal equipoise — a kind of stoic practice that resists the industry’s reflex toward unilateral action. And equipoise is indeed part of the discipline. The manager who moves slowly enough to think is already ahead of the manager who moves fast enough to appear decisive.

But equipoise is not governance. Equipoise is a disposition. Governance is the institutional architecture that makes disposition reproducible across people, decisions, and time. A stoic CISO is a single point of failure. A stoic CISO who has built a governance committee with documented decision rights, a risk appetite statement approved by the board, an escalation matrix tested in tabletop exercises, and an AI inventory maintained as a living artifact — that is an institution. The former retires; the latter persists.

The devas’ advantage in the Manthana, read carefully, was not merely that they were disciplined in the moment. It was that they had already accepted a covenant — the churning agreement itself — and operated inside a sanctioned framework. They trusted the framework to distribute outcomes. They did not attempt to seize the amrit when it emerged; they held their position and let the orchestrator act. That is not individual stoicism. That is operationalized discernment. That is governance.

This is the translation AAISM is asking of its candidates. The manual’s relentless emphasis on program thinking over project thinking, on governance committees over individual heroics, on voluntary framework adoption in advance of regulatory mandate, on risk owners rather than risk solvers, is not bureaucratic preference. It is the recognition that organizations, unlike individuals, cannot sustain equipoise through character alone. They sustain it through structure.

Vishnu Is a Lagging Indicator

One qualification sharpens the picture. In the current AI ecosystem, the Vishnu role (the authority that sets terms of distribution) is played by a coalition of forces: frontier labs, national AI safety institutes, sectoral regulators, insurance markets, and, increasingly, legislatures. The EU AI Act is Vishnu-shaped. The pre-deployment testing arrangements of the US AI Safety Institute (AISI, since renamed the Center for AI Standards and Innovation) are Vishnu-shaped. The emerging norms around responsible disclosure of AI capabilities are Vishnu-shaped.

But these orchestrating forces lag the capability they orchestrate. The EU AI Act took years to draft and will take years more to enforce. Sectoral AI guidance in healthcare, financial services, and employment is still emerging. Many frontier risks remain ungoverned by any statute. Organizations cannot wait for Vishnu to appear before they begin the churning. They have to begin, knowing the distribution will come, and prepare to be on the right side of it when it does.

This is why the AAISM Review Manual places such weight on voluntary frameworks. NIST AI RMF is explicitly voluntary. ISO/IEC 42001 is certifiable but not mandated. OECD AI Principles are aspirational. The mature organization adopts these as anticipatory governance, behaving now as if tomorrow’s regulation were already in force. This serves two purposes simultaneously. It manages risk that current regulation does not yet cover. And it earns the organization a seat at the table when the rules are being written, because regulators often draw on the practices of organizations that demonstrated discipline before discipline was required. The devas did not wait for Vishnu. They began the churning knowing he would be needed, and they were ready when he arrived.

The Quality of Participation

What emerges from this line of thinking is a claim about what AI security management actually is — a claim the AAISM body of knowledge asserts implicitly on every page, and one the exam tests relentlessly under the surface of its scenarios.

The claim is this: the individual organization cannot control the AI ecosystem, but it can control the quality of its own participation in the ecosystem, and governance is the practice of ensuring that participation is wise, proportionate, and defensible under uncertainty.

That is not a weak claim. It is not a retreat from ambition. It is the mature claim — the one that separates the AAISM holder from both the technologist who believes capability is destiny and the cynic who believes governance is theater. The manager does not win the capability race. The manager ensures that when capability arrives, the organization can receive it, evaluate it, authorize its use, and integrate it through governance that is already in place, rather than governance improvised under pressure.

There is a concept in the Bhagavad Gita that maps almost perfectly onto this posture — nishkama karma, action without attachment to outcome. The AAISM manager acts diligently, governs conscientiously, documents faithfully, escalates appropriately, and then releases attachment to whether the outcome is favorable, because the outcome is shaped by forces larger than the organization. What the manager is responsible for is the quality of the action itself. Was it risk-informed? Stakeholder-engaged? Framework-aligned? Proportionate to the actual risk rather than the perceived urgency? That is the only ground on which the manager stands. And it is, on every page of the manual and in every scenario on the exam, the ground ISACA is looking for.

The Exam Behind the Exam

There is an exam the candidate sits in a testing center. There is another exam the candidate sits every day on the job. Both reward the same disposition, which is why AAISM, done seriously, is not a credential so much as a training in how to think under a particular kind of pressure.

When a frontier capability arrives — real or rumored, verified or apocryphal — the instinct is to act. The discipline is to respond. Acting is technologist work: procure the tool, deploy the scanner, match the speed. Responding is manager’s work: reassess the threat landscape, update the risk register, brief the governance committee, evaluate the third-party terms, define authorized scope, identify the risk owner, align to the framework, and only then — with authority, with assurance, with proportion — decide.
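The sequence above can be written down as a gate, which is one way to see why it is a discipline rather than a mood. What follows is a minimal sketch, assuming a simple in-memory record: `RESPONSE_STEPS` and `ResponseRecord` are hypothetical names chosen for illustration, and the step labels are paraphrased from the paragraph above rather than taken from any ISACA template. The only rule it encodes is that the decision is unavailable until every step before it is done.

```python
from dataclasses import dataclass, field

# The manager's steps, in the order the paragraph above lists them.
RESPONSE_STEPS = (
    "reassess threat landscape",
    "update risk register",
    "brief governance committee",
    "evaluate third-party terms",
    "define authorized scope",
    "identify risk owner",
    "align to framework",
)


@dataclass
class ResponseRecord:
    """Tracks the response to one arriving capability (hypothetical structure)."""
    capability: str
    completed: set[str] = field(default_factory=set)

    def complete(self, step: str) -> None:
        """Mark a step as done; reject anything not on the agreed list."""
        if step not in RESPONSE_STEPS:
            raise ValueError(f"unknown step: {step}")
        self.completed.add(step)

    def outstanding(self) -> list[str]:
        """Steps still to do, in their original order."""
        return [s for s in RESPONSE_STEPS if s not in self.completed]

    def ready_to_decide(self) -> bool:
        """The decision opens only when nothing is outstanding."""
        return not self.outstanding()
```

A record created for a newly announced capability starts with `ready_to_decide()` returning `False`, and it stays that way no matter how urgent the headline, until each step has been marked complete.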

The devas did not win by reaching first. They won by holding position long enough for the distribution to resolve in their favor. They had done the work before the amrit emerged. They had entered the churning under a covenant. They trusted the orchestration. They did not grasp.

This is what governance looks like when it matures past bureaucracy and becomes, as it must, a form of institutional wisdom. Not a brake on action. Not a layer of approval. But the practice, made reproducible, of knowing which cup is being offered, by whom, on what terms, and whether it is yours to drink.

The manager who understands this has already passed the exam behind the exam. The one in the testing center is a formality.

Written in the spirit of an ongoing conversation about what AI security management actually asks of those who practice it — grounded in the ISACA AAISM Review Manual, NIST AI RMF, ISO/IEC 42001, the EU AI Act, and the older wisdom that sometimes illuminates them better than contemporary vocabulary can.

ISACA and AAISM are registered trademarks of ISACA. This post is independent commentary and is not affiliated with or endorsed by ISACA.
